When you’re a company that’s disrupting nearly everything it touches, anything you do is reported almost instantaneously in the media. That attention is amplified when you take down the websites of some of the world’s best-known tech brands for five hours or so. That’s what happened to Amazon Web Services on Tuesday, February 28, 2017.

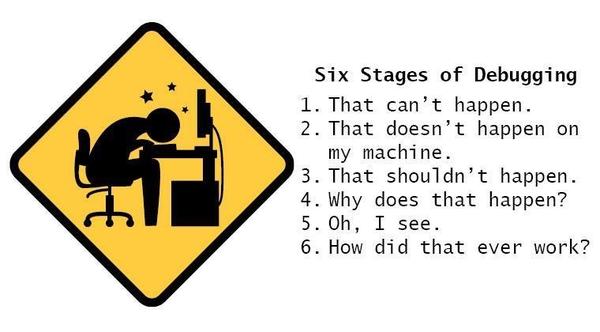

The effects of the outage have been discussed at length, but it now turns out that the root cause was a simple mistake in a command entered while debugging its billing system.

The mistyped command didn’t take S3 down by itself. It removed the wrong S3 servers, and that forced a full restart. At the scale AWS operates, the restart took a few hours, hence the outage.

That a mistyped command can trigger the wrong operation is understandable. After all, that’s where human error plays a role. The interesting thing is that Amazon is now going to change the system so that an incorrect command cannot trigger an outage like this one.

We don’t know the full extent of those changes, only that capacity removal is being capped (the allowable limit was too high) and that recovery times are to be greatly reduced. Will that prevent another outage from ever happening? Hardly. It’s just one hole that’s being plugged.
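To make the idea of capping capacity removal concrete, here is a minimal sketch of such a safeguard. It is not Amazon’s tooling: the function name `plan_removal`, the exception `CapacityRemovalError`, and the parameters `min_required` and `max_per_run` are all hypothetical, chosen only to illustrate the kind of check that rejects a mistyped request before any server is touched.

```python
class CapacityRemovalError(Exception):
    """Raised when a removal request would violate a safety limit."""


def plan_removal(active_servers, requested, min_required, max_per_run):
    """Validate a removal request and return the servers safe to remove.

    Hypothetical parameters (assumptions for this sketch):
      active_servers: names of servers currently in service
      requested:      names the operator asked to remove
      min_required:   fewest servers the subsystem needs to stay healthy
      max_per_run:    most servers a single command may remove
    """
    requested = sorted(set(requested))  # ignore accidental duplicates

    unknown = [s for s in requested if s not in active_servers]
    if unknown:
        raise CapacityRemovalError(f"unknown servers: {unknown}")

    # Cap how much capacity one command can remove.
    if len(requested) > max_per_run:
        raise CapacityRemovalError(
            f"request removes {len(requested)} servers, limit is {max_per_run}"
        )

    # Refuse to drop the subsystem below its minimum capacity.
    remaining = len(active_servers) - len(requested)
    if remaining < min_required:
        raise CapacityRemovalError(
            f"only {remaining} servers would remain, need {min_required}"
        )

    return requested
```

A check like this only narrows the blast radius of a typo. It does nothing about mistakes made elsewhere, which is why it counts as one hole plugged and no more.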

What we need to understand is that any technology can be broken. IBM says that the cloud can be made more secure than the static security layers typically found in traditional infrastructure, but who’s going to account for human error, as was clearly the case in the AWS outage?
