Microsoft recently released Windows 10 Insider Build 16257, and it brings a major new feature that’s been in the works for the past three years – Eye Control.

The feature is essentially an accessibility function that tracks a user’s eye movements and converts them into commands on Windows 10. Users with disabilities such as ALS, which often leaves only the eye muscles unaffected, can now use compatible eye-tracking (gaze-tracking) hardware to control their Windows 10 machines through a virtual, on-screen keyboard and mouse.

Eye Control is still in beta, but it has been in development for three years, since the 2014 One Week hackathon, when former NFL player Steve Gleason, who has ALS, challenged Microsoft’s creative team to create a useful product for people living with ALS.

The result of that was the Eye Gaze Wheelchair, an accessibility product that could be controlled by looking at commands on a screen.

That same technology is now available on Windows 10 in the form of Eye Control, and allows users to perform tasks that would normally require a physical keyboard and mouse. Support for the first beta of Eye Control is limited to an EN-US keyboard layout, but the company is working on expanding support to other layouts and languages.

To use Eye Control, you’ll need an Insider account and an installation of Windows 10 Build 16257. You’ll also need compatible hardware; Microsoft suggests the Tobii Eye Tracker 4C, which was originally intended for gaming.

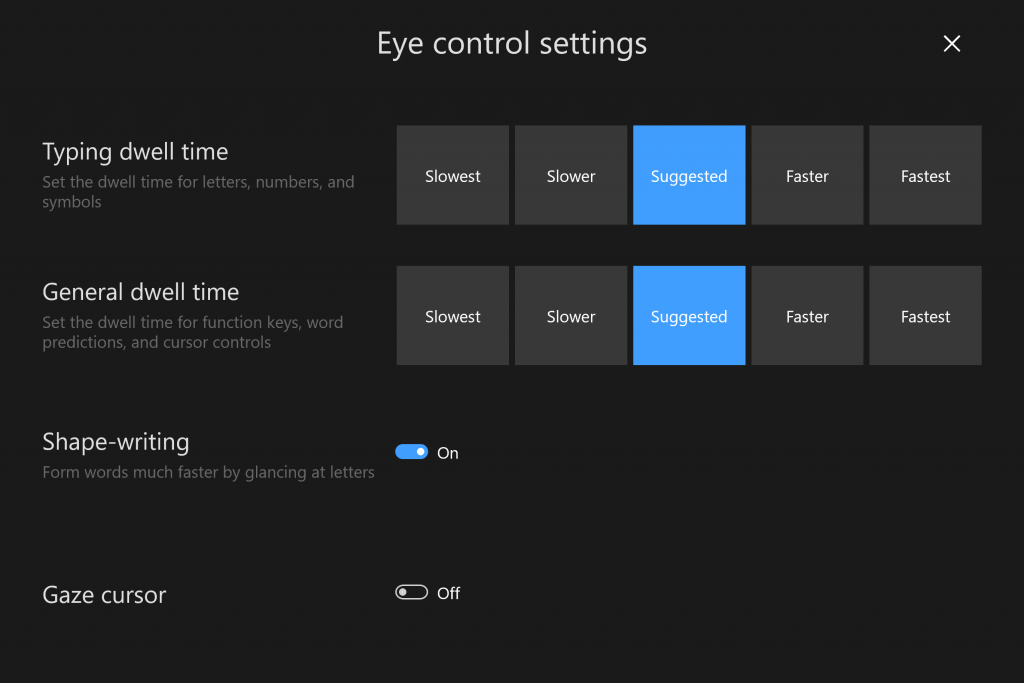

One of the interesting features of Eye Control is shape-writing. If you’ve ever used the swipe-to-type function on a virtual keyboard, this works the same way, except that it tracks the user’s gaze from key to key instead of a finger swipe. It comes with the same word prediction found on most virtual keyboards.
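
For readers curious how shape-writing could work in principle, here is a toy sketch in Python. It is not Microsoft's implementation. The key layout, the function names and the matching rule are all assumptions made up for illustration. It maps each gaze sample to the nearest key, collapses repeats into a trace, and keeps the vocabulary words whose letters appear in order within that trace.

```python
from math import hypot

# Hypothetical layout: three QWERTY rows, each key one unit wide,
# with the lower rows shifted right as on a physical keyboard.
ROWS = ["qwertyuiop", "asdfghjkl", "zxcvbnm"]
OFFSETS = [0.0, 0.25, 0.75]
KEY_CENTERS = {
    ch: (col + OFFSETS[row] + 0.5, row + 0.5)
    for row, letters in enumerate(ROWS)
    for col, ch in enumerate(letters)
}


def nearest_key(x, y):
    """Return the letter whose key center is closest to the point (x, y)."""
    return min(
        KEY_CENTERS,
        key=lambda ch: hypot(KEY_CENTERS[ch][0] - x, KEY_CENTERS[ch][1] - y),
    )


def collapse(text):
    """Drop consecutive duplicate letters, e.g. 'hello' -> 'helo'."""
    out = []
    for ch in text:
        if not out or out[-1] != ch:
            out.append(ch)
    return "".join(out)


def gaze_to_keys(samples):
    """Turn a list of (x, y) gaze samples into a trace of keys passed over."""
    return collapse("".join(nearest_key(x, y) for x, y in samples))


def is_subsequence(word, trace):
    """True if the letters of word appear in trace in the same order."""
    remaining = iter(trace)
    return all(ch in remaining for ch in word)


def candidates(samples, vocabulary):
    """Words that start and end where the gaze did and fit inside the trace."""
    trace = gaze_to_keys(samples)
    if not trace:
        return []
    matches = []
    for word in vocabulary:
        letters = collapse(word)
        if (
            letters
            and letters[0] == trace[0]
            and letters[-1] == trace[-1]
            and is_subsequence(letters, trace)
        ):
            matches.append(word)
    return matches
```

A real system would also have to cope with noisy gaze data and rank candidates by likelihood, which this sketch ignores entirely.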

For the full history on how Eye Control was developed, please read Microsoft’s post here.
