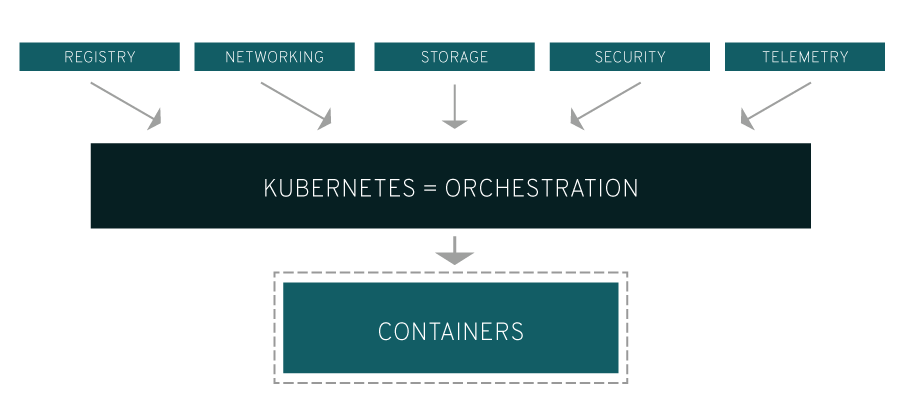

Kubernetes is an open-source framework widely used to orchestrate and manage containerized workloads and services. Well suited to cloud-native applications, Kubernetes automates many of the processes involved in deploying and scaling containerized applications. It also enables portability across infrastructures.

Kubernetes can be viewed as a container platform, a microservices platform, or a highly portable cloud platform.

The name Kubernetes comes from the Greek word for helmsman or pilot.

Kubernetes Key Features:

- Service discovery and load balancing
- Storage orchestration
- Automated rollouts and rollbacks
- Automatic bin packing
- Self-healing
- Configuration management

Started by Google as an internal project, Kubernetes was open sourced by the search engine giant in 2014 and is now maintained and promoted by the Cloud Native Computing Foundation.

“Kubernetes was originally developed and designed by engineers at Google. Google was one of the early contributors to Linux container technology and has talked publicly about how everything at Google runs in containers. (This is the technology behind Google’s cloud services.) Google generates more than 2 billion container deployments a week, all powered by an internal platform: Borg. Borg was the predecessor to Kubernetes and the lessons learned from developing Borg over the years became the primary influence behind much of the Kubernetes technology.” – Red Hat

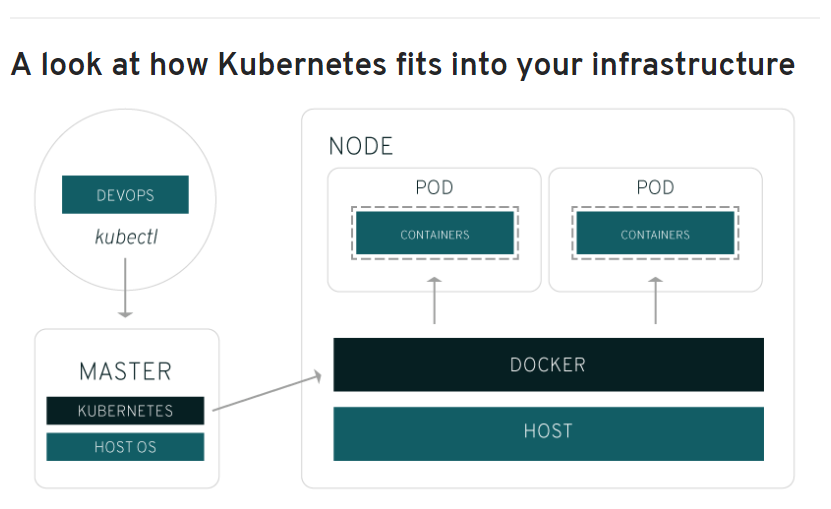

“A Pod is the basic building block of Kubernetes–the smallest and simplest unit in the Kubernetes object model that you create or deploy. Pod represents a running process on your cluster.”

Image Source: Red Hat

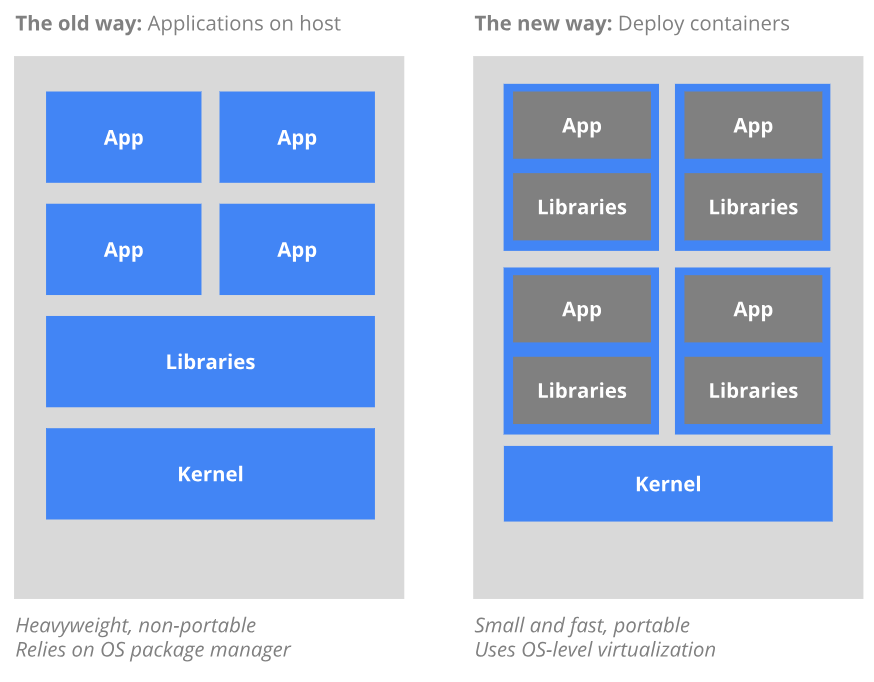

What is a Container?

“A container is a standard unit of software that packages up code and all its dependencies so the application runs quickly and reliably from one computing environment to another.”

Just as the shipping industry used containers to standardize the movement of goods across the world, software containers aim to do the same for the technology industry by standardizing the application environment. Containers provide virtualization at the operating-system level instead of the traditional hardware-level virtualization.

Containers are isolated from each other and from the host, yet they share the host's operating-system kernel instead of each carrying a full guest operating system. This sharing of resources makes them lighter and quicker to build than virtual machines. Containers are also decoupled from the underlying infrastructure and from the host file system, which makes them highly portable across infrastructures and operating system distributions.

Why do you need Kubernetes?

A real-world production application will run across several containers and these containers may be deployed across multiple hosts. This makes security and management of containers a complicated task.

Kubernetes automates several manual processes required to deploy and scale containers, and manage containerized workloads. The container-centric management environment offered by Kubernetes can be used to orchestrate computing, networking and storage infrastructure.

What can you do with Kubernetes?

Kubernetes gives you the platform to schedule and run containers on clusters of physical or virtual machines. With Kubernetes you can implement a container-based infrastructure in production environments.

With Kubernetes you can:

- Orchestrate containers across multiple hosts.
- Make better use of hardware to maximize resources needed to run your enterprise apps.
- Control and automate application deployments and updates.
- Mount and add storage to run stateful apps.
- Scale containerized applications and their resources on the fly.
- Declaratively manage services, which guarantees the deployed applications are always running how you deployed them.
- Health-check and self-heal your apps with autoplacement, autorestart, autoreplication, and autoscaling.
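Declarative management and self-healing both rest on the same idea: compare the declared state with the observed state and act on the difference. The following is a minimal sketch of that idea in Python, not how Kubernetes itself is implemented. `ToyCluster`, `reconcile`, and the `web` app name are hypothetical and exist only for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class ToyCluster:
    """Hypothetical in-memory stand-in for a cluster (not a Kubernetes API)."""
    running: list = field(default_factory=list)
    _next_id: int = 0

    def start_replica(self, app: str) -> None:
        # Give every new replica a unique, increasing suffix.
        self.running.append(f"{app}-{self._next_id}")
        self._next_id += 1

    def stop_replica(self) -> None:
        # Remove the most recently started replica.
        self.running.pop()


def reconcile(cluster: ToyCluster, app: str, desired_replicas: int) -> None:
    """Drive the observed replica count toward the declared one."""
    while len(cluster.running) < desired_replicas:
        cluster.start_replica(app)
    while len(cluster.running) > desired_replicas:
        cluster.stop_replica()


cluster = ToyCluster()
reconcile(cluster, "web", 3)      # initial deployment: three replicas
cluster.running.remove("web-1")   # simulate a crashed replica
reconcile(cluster, "web", 3)      # self-healing: a replacement is started
print(cluster.running)            # ['web-0', 'web-2', 'web-3']
```

The caller never says "start one more replica"; it only restates the desired count, and the loop works out what has to change.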

Kubernetes Components:

The components used in Kubernetes are classified as Master Components, Node Components, and Addons.

Master Components:

These components form the cluster’s control plane and make global decisions about the cluster.

- kube-apiserver: Exposes the Kubernetes API. It is the front end for the Kubernetes control plane and is designed to scale horizontally.
- etcd: A consistent and highly available key-value store that holds the cluster data.
- kube-scheduler: Component on the master that watches newly created pods that have no node assigned, and selects a node for them to run on. Factors taken into account for scheduling decisions include individual and collective resource requirements, hardware/software/policy constraints, affinity and anti-affinity specifications, data locality, inter-workload interference and deadlines.
- kube-controller-manager: Component that runs controllers. Logically, each controller is a separate process, but to reduce complexity, they are all compiled into a single binary and run in a single process.
- cloud-controller-manager: Runs controllers that interact with the underlying cloud providers. The cloud-controller-manager binary was introduced as an alpha feature in Kubernetes release 1.6.
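The node selection done by kube-scheduler can be pictured as two steps: filter out nodes that cannot run the pod, then rank the remaining ones. The sketch below is a toy version under simplifying assumptions. It considers only CPU and memory requests and uses a made-up scoring rule (most CPU left over after placement). `Node`, `PodRequest`, `fits`, `score`, and `pick_node` are hypothetical names, not part of any Kubernetes library.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Node:
    name: str
    free_cpu_millicores: int
    free_memory_mib: int


@dataclass
class PodRequest:
    name: str
    cpu_millicores: int
    memory_mib: int


def fits(node: Node, pod: PodRequest) -> bool:
    """Filter step: the node must have enough free CPU and memory."""
    return (node.free_cpu_millicores >= pod.cpu_millicores
            and node.free_memory_mib >= pod.memory_mib)


def score(node: Node, pod: PodRequest) -> int:
    """Score step (assumed rule): prefer the node with the most CPU left over."""
    return node.free_cpu_millicores - pod.cpu_millicores


def pick_node(nodes: List[Node], pod: PodRequest) -> Optional[str]:
    """Return the name of the best feasible node, or None if nothing fits."""
    candidates = [n for n in nodes if fits(n, pod)]
    if not candidates:
        return None  # the pod stays unscheduled
    return max(candidates, key=lambda n: score(n, pod)).name


nodes = [
    Node("node-a", free_cpu_millicores=500, free_memory_mib=2048),
    Node("node-b", free_cpu_millicores=2000, free_memory_mib=1024),
    Node("node-c", free_cpu_millicores=1500, free_memory_mib=4096),
]
pod = PodRequest("api", cpu_millicores=1000, memory_mib=2048)
print(pick_node(nodes, pod))  # node-c
```

Here `node-a` lacks CPU and `node-b` lacks memory, so only `node-c` survives the filter step. Constraints such as affinity or data locality would appear as extra filter or score rules in the same structure.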

Node Components

These components run on every node and provide the Kubernetes runtime environment.

- kubelet: An agent that runs on each node in the cluster. It makes sure that containers are running in a pod. The kubelet takes a set of PodSpecs that are provided through various mechanisms and ensures that the containers described in those PodSpecs are running and healthy. The kubelet doesn’t manage containers which were not created by Kubernetes.
- kube-proxy: Enables the Kubernetes service abstraction by maintaining network rules on the host and performing connection forwarding.
- Container runtime: The software that is responsible for running containers. Kubernetes supports several runtimes: Docker, containerd, CRI-O, rktlet and any implementation of the Kubernetes CRI (Container Runtime Interface).

You can find the full list of addons at kubernetes.io.
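To make the PodSpec mentioned above more concrete, here is a small sketch that builds a minimal Pod manifest as a Python dictionary and prints it as JSON. The top-level fields (`apiVersion`, `kind`, `metadata`, `spec.containers`) follow the Kubernetes Pod object. The helper `make_pod_manifest`, the pod name `hello`, and the image `nginx` are assumptions chosen only for illustration.

```python
import json


def make_pod_manifest(name: str, image: str) -> dict:
    """Build a minimal single-container Pod manifest (illustrative helper)."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            # A pod may hold several containers; this sketch declares one.
            "containers": [
                {"name": name, "image": image},
            ],
        },
    }


manifest = make_pod_manifest("hello", "nginx")
print(json.dumps(manifest, indent=2))
```

Kubernetes accepts manifests in JSON as well as YAML, so output like this could in principle be saved to a file and submitted to a cluster. Real workloads usually add resource requests, labels, and probes.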

Containers vs Virtual Machines

“Containers can run all kinds of applications, but because they are so different from virtual machines, a lot of the older software that many big companies are still running doesn’t translate to this model,” writes Frederic Lardinois of TechCrunch, explaining why containers will not replace virtual machines.

“Virtual machines can help you move those old applications into a cloud service like AWS or Microsoft Azure, however, so even though containers have their advantages, virtual machines aren’t going away anytime soon.”