AWS recently released a service called AWS Deep Learning Containers (AWS DL Containers), aimed at deep learning researchers. The service provides Docker images that come pre-installed with deep learning frameworks and are pre-configured and validated by AWS, so custom ML environments can be deployed quickly.

AWS DL Containers currently support Apache MXNet and TensorFlow, and AWS plans to add more frameworks, such as Facebook’s PyTorch. Unveiled at the AWS Summit in Santa Clara in March 2019, DL Containers can be used for both training and inference. They are the company’s response to requests from Amazon EKS and ECS users for a simple way to deploy TensorFlow workloads to the cloud.

According to AWS Chief Evangelist Jeff Barr, support for more services will follow. He also said the images are available for free and can be used as-is or customized for a given workload by adding packages and libraries.

There are several types of AWS DL Containers available, all of which are based on combinations of the following criteria:

- Framework – TensorFlow or MXNet.

- Mode – Training or Inference. You can train on a single node or on a multi-node cluster.

- Environment – CPU or GPU.

- Python Version – 2.7 or 3.6.

- Distributed Training – Availability of the Horovod framework.

- Operating System – Ubuntu 16.04.
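Because the images are defined by combinations of these criteria, the candidate space multiplies out quickly. The sketch below simply enumerates framework, mode, environment and Python version with `itertools.product`; Horovod availability and the Ubuntu 16.04 base are treated as shared properties rather than choices. The label format is hypothetical and is not the official image naming scheme, and AWS may not publish every combination.

```python
from itertools import product

# Criteria from the list above. Horovod availability and the Ubuntu 16.04
# base are shared properties, so they are not enumerated as choices.
FRAMEWORKS = ("tensorflow", "mxnet")
MODES = ("training", "inference")
ENVIRONMENTS = ("cpu", "gpu")
PYTHON_VERSIONS = ("py27", "py36")


def candidate_variants():
    """Return one hypothetical label per framework/mode/env/Python combination.

    The label format is illustrative only; it is not the naming scheme
    used by the published images.
    """
    return [
        f"{fw}-{mode}-{env}-{py}"
        for fw, mode, env, py in product(FRAMEWORKS, MODES, ENVIRONMENTS, PYTHON_VERSIONS)
    ]


variants = candidate_variants()
print(len(variants))  # 16 (2 x 2 x 2 x 2)
print(variants[0])    # tensorflow-training-cpu-py27
```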

How to Use Amazon Deep Learning Containers

Setup is fairly simple. In Barr’s example, the user creates an ECS cluster backed by a GPU instance such as a p2.8xlarge, launched as shown below:

```shell
$ aws ec2 run-instances --image-id ami-0ebf2c738e66321e6 \
    --count 1 --instance-type p2.8xlarge \
    --key-name keys-jbarr-us-east …
```
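The same launch can be sketched with the boto3 EC2 client, whose `run_instances` call takes `ImageId`, `InstanceType`, `KeyName`, `MinCount` and `MaxCount`. This is a minimal sketch, assuming a client created elsewhere (for example `boto3.client("ec2", region_name="us-east-1")`) is passed in so the function can be exercised without credentials. The key pair name `my-key-pair` is hypothetical, and the options elided in the command above are not reproduced.

```python
def launch_gpu_instance(ec2, image_id, key_name, instance_type="p2.8xlarge"):
    """Launch a single instance and return its instance ID.

    `ec2` is assumed to be a boto3 EC2 client; it is injected so the
    function can be tested with a stand-in object.
    """
    response = ec2.run_instances(
        ImageId=image_id,
        InstanceType=instance_type,
        KeyName=key_name,  # hypothetical key pair name supplied by the caller
        MinCount=1,        # together with MaxCount, mirrors --count 1
        MaxCount=1,
    )
    return response["Instances"][0]["InstanceId"]


# Example call (needs real AWS credentials, so it is left commented out):
# import boto3
# ec2 = boto3.client("ec2", region_name="us-east-1")
# launch_gpu_instance(ec2, "ami-0ebf2c738e66321e6", "my-key-pair")
```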

Next, check that the cluster is running and that the ECS Container Agent is active, then create a text file containing the task definition:

```json
{
    "requiresCompatibilities": [
        "EC2"
    ],
    "containerDefinitions": [
        {
            "entryPoint": [
                "/usr/bin/tf_serving_entrypoint.sh"
            ],
            "name": "EC2TFInference",
            "image": "841569659894.dkr.ecr.us-east-1.amazonaws.com/sample_tf_inference_images:gpu_with_half_plus_two_model",
            "memory": 8111,
            "cpu": 256,
            "resourceRequirements": [
                {
                    "type": "GPU",
                    "value": "1"
                }
            ],
            "essential": true,
            "portMappings": [
                {
                    "hostPort": 8500,
                    "protocol": "tcp",
                    "containerPort": 8500
                },
                {
                    "hostPort": 8501,
                    "protocol": "tcp",
                    "containerPort": 8501
                }
            ],
            "logConfiguration": {
                "logDriver": "awslogs",
                "options": {
                    "awslogs-group": "/ecs/TFInference",
                    "awslogs-region": "us-east-1",
                    "awslogs-stream-prefix": "ecs"
                }
            }
        }
    ],
    "volumes": [],
    "networkMode": "bridge",
    "placementConstraints": [],
    "family": "Ec2TFInference"
}
```
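Both preparatory steps, confirming the cluster and its ECS Container Agent and writing the task definition file, can be scripted. This sketch assumes a boto3 ECS client is passed in and uses the real `describe_clusters`, `list_container_instances` and `describe_container_instances` calls; it ignores pagination, which is fine for a one-instance cluster. The port-8501 check in `load_task_definition` is an illustrative sanity check, not something ECS requires.

```python
import json


def cluster_ready(ecs, cluster):
    """Return True if the cluster is ACTIVE and every registered container
    instance reports a connected ECS Container Agent.

    `ecs` is assumed to be a boto3 ECS client. Pagination of
    list_container_instances is ignored in this sketch.
    """
    clusters = ecs.describe_clusters(clusters=[cluster])["clusters"]
    if not clusters or clusters[0]["status"] != "ACTIVE":
        return False
    arns = ecs.list_container_instances(cluster=cluster)["containerInstanceArns"]
    if not arns:
        return False  # no instance has joined the cluster yet
    instances = ecs.describe_container_instances(
        cluster=cluster, containerInstances=arns
    )["containerInstances"]
    return all(instance["agentConnected"] for instance in instances)


def load_task_definition(path):
    """Read the task definition file and check that port 8501 is mapped."""
    with open(path) as f:
        task_def = json.load(f)
    container = task_def["containerDefinitions"][0]
    ports = {m["containerPort"] for m in container.get("portMappings", [])}
    if 8501 not in ports:
        raise ValueError("TensorFlow Serving REST port 8501 is not mapped")
    return task_def
```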

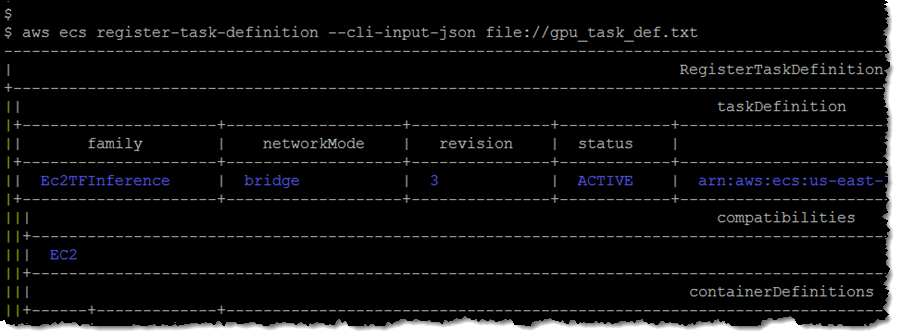

The task definition, whose container image reflects the chosen mode and environment (here, GPU inference), is then registered, and the returned revision number is captured; in this case, 3:

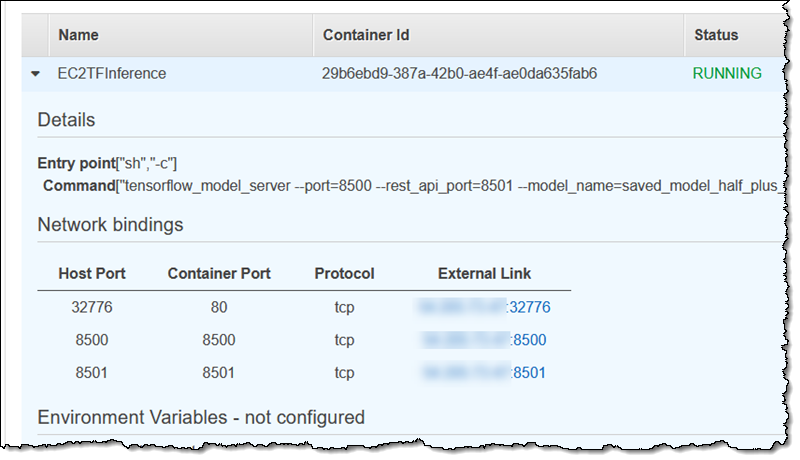
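Registration can be sketched with boto3 as well. The top-level keys of the task definition file match the keyword arguments of the ECS client’s `register_task_definition`, and the response’s `taskDefinition` includes `family` and `revision`. This is a minimal sketch, assuming a boto3 ECS client is injected; the helper name is hypothetical.

```python
def register_and_get_revision(ecs, task_def):
    """Register `task_def` (a dict loaded from the JSON file) and return
    the "family:revision" string that later API calls accept.

    `ecs` is assumed to be a boto3 ECS client.
    """
    response = ecs.register_task_definition(**task_def)
    registered = response["taskDefinition"]
    return f"{registered['family']}:{registered['revision']}"
```

With the article’s definition this would yield a string such as `Ec2TFInference:3`, which is the form `create_service` accepts for its `taskDefinition` argument.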

The task definition and revision number are then used to create a service. Once the service’s task is running, you can navigate to it in the console and find the external binding for port 8501.
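Creating the service and locating the port binding can also be done programmatically. This sketch assumes an injected boto3 ECS client and uses the real `create_service` call; the cluster and service names are hypothetical. The binding lookup parses the `networkBindings` entries that `describe_tasks` returns for each container. In bridge mode the reachable address is the instance’s public IP, so only the host port is returned.

```python
def create_inference_service(ecs, cluster, service_name, task_definition):
    """Create a one-task EC2 service and return its ARN.

    `task_definition` is a "family:revision" string such as
    "Ec2TFInference:3"; cluster and service names are caller-chosen.
    """
    response = ecs.create_service(
        cluster=cluster,
        serviceName=service_name,
        taskDefinition=task_definition,
        desiredCount=1,
        launchType="EC2",
    )
    return response["service"]["serviceArn"]


def host_port_for(describe_tasks_response, container_port=8501):
    """Return the host port bound to `container_port`, or None if absent."""
    for task in describe_tasks_response.get("tasks", []):
        for container in task.get("containers", []):
            for binding in container.get("networkBindings", []):
                if binding.get("containerPort") == container_port:
                    return binding.get("hostPort")
    return None
```

In practice the response would come from `ecs.describe_tasks(cluster=..., tasks=arns)`, with `arns` taken from `ecs.list_tasks(cluster=..., serviceName=...)["taskArns"]`.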

The sample model implements the function y = ax + b with a = 0.5 and b = 2 (hence the name half_plus_two). Running inference on the inputs 1.0, 2.0 and 5.0 should therefore return 2.5, 3.0 and 4.5.
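The expected outputs can be checked by hand or in code. This small sketch evaluates y = 0.5x + 2 for the three inputs and adds a tolerance-based comparison for predictions returned by the server; the helper names are illustrative only.

```python
import math


def half_plus_two(x, a=0.5, b=2.0):
    # y = a*x + b with the sample model's parameters a = 0.5 and b = 2
    return a * x + b


def matches_expected(inputs, predictions, rel_tol=1e-6):
    # Compare server predictions with the closed-form values, allowing for
    # small floating-point differences in what the model returns.
    return len(inputs) == len(predictions) and all(
        math.isclose(half_plus_two(x), p, rel_tol=rel_tol)
        for x, p in zip(inputs, predictions)
    )


inputs = [1.0, 2.0, 5.0]
print([half_plus_two(x) for x in inputs])  # [2.5, 3.0, 4.5]
```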

```shell
$ curl -d '{"instances": [1.0, 2.0, 5.0]}' \
    -X POST http://xx.xxx.xx.xx:8501/v1/models/saved_model_half_plus_two_gpu:predict
{
    "predictions": [2.5, 3.0, 4.5]
}
```
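The curl call targets TensorFlow Serving’s REST predict endpoint, which accepts a JSON body with an `instances` list and responds with a `predictions` list. A standard-library client for the same request might look like the sketch below; the host is a placeholder, as in the curl example, and the default model name is taken from the URL above.

```python
import json
import urllib.request


def build_predict_request(host, instances, model="saved_model_half_plus_two_gpu", port=8501):
    """Build a POST request for TensorFlow Serving's REST predict endpoint."""
    url = f"http://{host}:{port}/v1/models/{model}:predict"
    body = json.dumps({"instances": instances}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={"Content-Type": "application/json"},
    )


def predict(host, instances, timeout=10):
    """Send the request and return the 'predictions' list from the reply."""
    request = build_predict_request(host, instances)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))["predictions"]
```

Against a running service, `predict("xx.xxx.xx.xx", [1.0, 2.0, 5.0])` (with the placeholder replaced by the instance’s address) should return the same values as the curl example.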

Barr’s example was intended to show how easy it is to run inference with the new DL Containers and a pre-trained model. The service is flexible enough that users can also launch a training container, train a model, and then run inference against it.

The big cloud players are now introducing ready-to-deploy frameworks and hardware acceleration. Google announced the TensorFlow 2.0 alpha, and Microsoft and NVIDIA recently announced some key integrations:

“By integrating NVIDIA TensorRT with ONNX Runtime and RAPIDS with Azure Machine Learning service, we’ve made it easier for machine learning practitioners to leverage NVIDIA GPUs across their data science workflows,” according to Kari Briski, Senior Director of Product Management for Accelerated Computing Software at NVIDIA.

AWS Deep Learning Containers are the latest addition to AWS’s broad and deep list of services aimed at data scientists and deep learning researchers. They are available through Amazon ECR at no cost; as with most of AWS’s free services, you pay only for the resources you use.